Introduction

Picking up the thread from our previous blog post, we want to give you a more detailed look at Archivum’s unique features. One of them is that it brings the dynamic flexibility of storage tiering from the infrastructure level up to the application level. To cut a long story short: In this entry in the series, I’ll focus on how our archiving solution uses cold storage.

Stay cool, save big!

Before I get to the heart of this provocative headline, we need a little more background: In recent years, all major cloud providers have developed their own multi-tier cloud storage options. Their ongoing competition centers on the trade-off between the availability of stored data and the cost of persisting it. Today, most cloud providers offer a range of storage tiers, each striking its own balance between these two factors to suit different use cases.

As the names suggest, ‘cold’ storage stands in sharp contrast to ‘hot’ storage. If we define hot storage as data at hand, cold storage means data at rest. Technically speaking, the two differ in two main respects. The first is performance: Hot storage often uses solid-state drives, which offer faster access times and lower latency, while cold storage mostly uses hard disk drives, which are durable but have comparatively higher latency. The second is availability: Hot storage is highly available and online at all times, whereas cold storage offers much lower availability or is even completely offline.

Both of these aspects, fast hardware and the overhead of keeping data online at all times, give hot storage a much higher price tag. Since cold storage costs far less per unit of capacity, cleverly allocating data to the appropriate storage tiers can already save a lot of money. On top of that, our feature-rich cloud-native application Archivum makes even more economical use of resources by provisioning lifecycles for the different kinds of data in an organization’s pipeline. Fine-tuning the storage tier to the lifecycle of each data pipeline drives storage costs down even further. This is exactly how we are able to save organizations heaps of money!
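
To make the cost argument a little more concrete, here is a minimal Python sketch that compares keeping a file on hot storage for its whole lifetime with moving it to cold storage after an initial period. The per-GB monthly prices are hypothetical placeholders, not any provider’s actual rates, and the sketch deliberately prices capacity only, ignoring transaction, retrieval, and similar fees. The names `TierPrices`, `storage_cost`, and `tiering_savings` are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierPrices:
    """Monthly capacity prices per GB. Placeholder values, not real price-list data."""
    hot_per_gb_month: float
    cold_per_gb_month: float


def storage_cost(size_gb: float, hot_months: float, cold_months: float,
                 prices: TierPrices) -> float:
    """Capacity cost of keeping a file hot for `hot_months`, then cold for `cold_months`.

    Only capacity is priced; transaction, retrieval and similar fees are ignored.
    """
    return size_gb * (hot_months * prices.hot_per_gb_month
                      + cold_months * prices.cold_per_gb_month)


def tiering_savings(size_gb: float, archival_months: float,
                    retention_months: float, prices: TierPrices) -> float:
    """Savings of tiering compared with keeping the file hot for its whole lifetime."""
    lifetime = archival_months + retention_months
    all_hot = storage_cost(size_gb, lifetime, 0, prices)
    tiered = storage_cost(size_gb, archival_months, retention_months, prices)
    return all_hot - tiered


if __name__ == "__main__":
    # Hypothetical example: 1,000 GB, 3 months hot, then 84 months (7 years) cold.
    example_prices = TierPrices(hot_per_gb_month=0.02, cold_per_gb_month=0.002)
    print(f"{tiering_savings(1000, 3, 84, example_prices):.2f}")
```

The savings reduce to size × cold months × (hot price - cold price), so with these placeholder numbers they come to 1,000 × 84 × 0.018 = 1,512 currency units. The longer the data sits in cold storage, the more the price gap between tiers dominates.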

Now that you’re familiar with the underlying rationale, you may wonder how we managed to pull all this off. In the next part, I’ll go over the providers and services it takes to accomplish such an intricate feature.

The tech behind it all

Back to basics

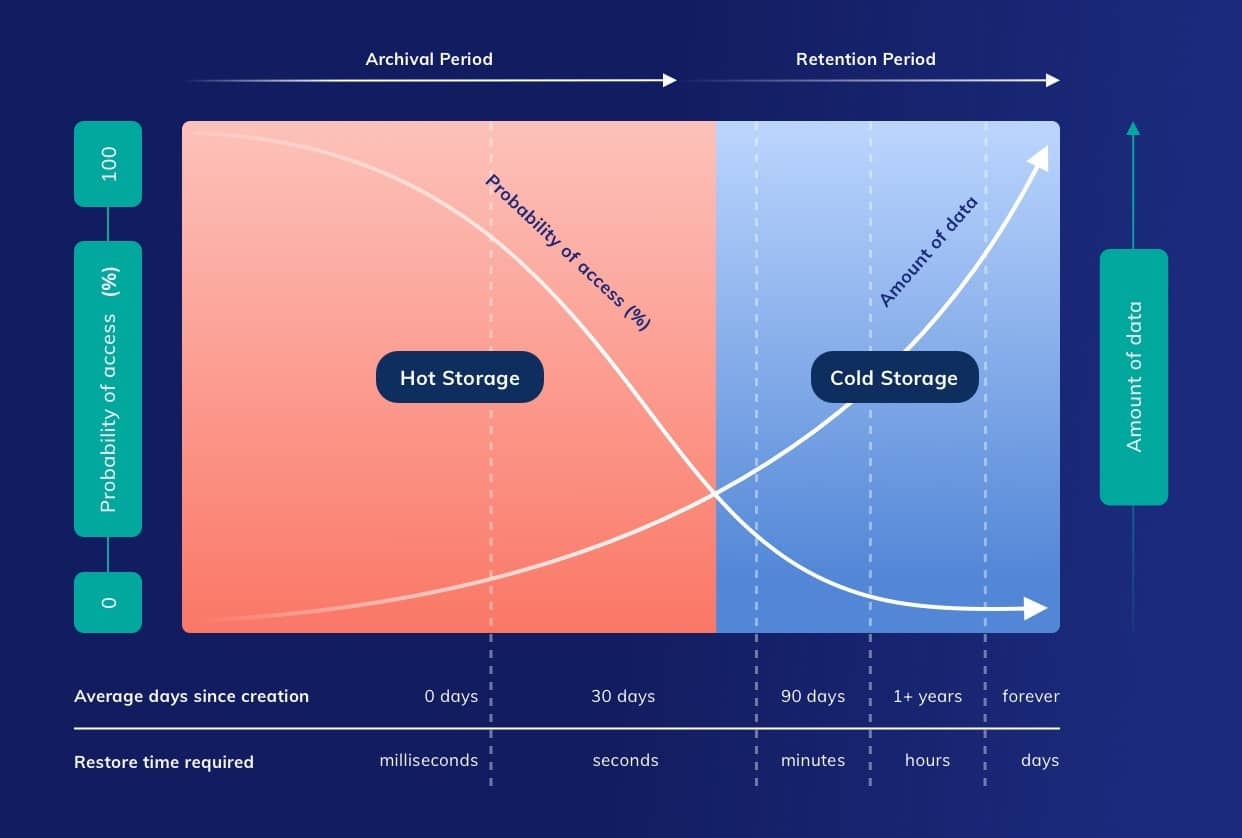

Let’s start off with a quick reminder of Archivum’s fundamental operating principles: Within the application, files are grouped in user-defined containers. A file’s lifecycle begins when it is created inside one of these containers. After that, there is a period of time during which users need to be able to download, update, and share the file. We refer to this timeframe as the ‘Archival Period’. During this period, the data is kept on hot storage, so users can change, tag, and otherwise work with the file’s content. It is followed by a second interval, the ‘Retention Period’. During it, documents are archived on cold storage and, as alluded to earlier, are no longer immediately available for download and can no longer be updated. At the end of the lifecycle, the retained data has served its purpose and is automatically deleted by our nifty system.

In summary: Archivum automatically moves hot-stored data to cold storage after the Archival Period and disposes of it after the Retention Period. Users can define both periods for each individual container.
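
The lifecycle rules above boil down to a simple function of a file’s creation time and its container’s two periods. The following Python sketch is an illustration rather than Archivum’s actual implementation. It computes which phase a file is in at a given moment, and `ContainerPolicy`, `Phase`, and `lifecycle_phase` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum


class Phase(Enum):
    ARCHIVAL = "archival"    # on hot storage: download, update, share
    RETENTION = "retention"  # on cold storage: read-only, restore on request
    EXPIRED = "expired"      # end of lifecycle: due for deletion


@dataclass(frozen=True)
class ContainerPolicy:
    """Per-container lifecycle settings (hypothetical model)."""
    archival_period: timedelta
    retention_period: timedelta


def lifecycle_phase(created_at: datetime, policy: ContainerPolicy,
                    now: datetime) -> Phase:
    """Return the phase a file created at `created_at` is in at time `now`."""
    archive_at = created_at + policy.archival_period   # move to cold storage here
    delete_at = archive_at + policy.retention_period   # delete here
    if now < archive_at:
        return Phase.ARCHIVAL
    if now < delete_at:
        return Phase.RETENTION
    return Phase.EXPIRED
```

Consider a container with a 90-day Archival Period and a ten-year Retention Period. A file created on 1 January 2024 would still be in its Archival Period on 1 February 2024 and in its Retention Period on 1 June 2024. A scheduler only needs the two computed boundaries, `archive_at` and `delete_at`, to know when to act on the file.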

Selecting a provider

Since a number of Archivum’s benefits rely on storage tiering at the infrastructure level, it is worth adding a few words about our choice of provider. We ultimately went with Azure Storage, for a couple of reasons. A comprehensive side-by-side comparison of all the different providers is surely beyond the scope of this blog post, so I’ll only touch on the main factors that decided our choice:

- First of all, Archivum is designed for archiving very large amounts of data belonging to entire organizations, not just to a single user. Given the sheer volume of data that will be archived over months and years, even tiny differences in pricing add up to big figures. In this regard, Azure offered us the best prices per archive tier.

- The second reason is a somewhat technical one: Azure sets storage tiers on individual blobs, as opposed to on a container or even on a storage account level, and it can restore archived data on the fly by rehydrating a blob while copying it to an online tier. This gives us a helpful degree of flexibility, since Archivum itself can easily move individual files between tiers, and it significantly improves the efficiency of handling operations within a single storage account. However, I should point out that this kind of tiering is certainly possible with other providers as well. In our particular case, Azure was the most straightforward option and did not require any tedious workarounds.
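
Copy-based rehydration can be illustrated with a hedged Python sketch. It is written against `BlobClient.start_copy_from_url` from the `azure-storage-blob` (v12) SDK, whose `standard_blob_tier` and `rehydrate_priority` keyword arguments let the copy of an archived blob land directly in an online tier. The client is passed in through a small protocol, so the sketch runs without the SDK installed. The names `CopyTarget` and `rehydrate_by_copy`, as well as the restriction to the Hot and Cool tiers, are assumptions for illustration, and the accepted argument types should be checked against the SDK reference.

```python
from typing import Any, Protocol

# Rehydration priorities offered by Azure Blob Storage.
REHYDRATE_PRIORITIES = ("Standard", "High")
# Online destination tiers this sketch allows (an assumed subset).
ONLINE_TIERS = ("Hot", "Cool")


class CopyTarget(Protocol):
    """The subset of azure.storage.blob.BlobClient this sketch relies on."""

    def start_copy_from_url(self, source_url: str, **kwargs: Any) -> Any: ...


def rehydrate_by_copy(source_url: str, destination: CopyTarget,
                      tier: str = "Hot", priority: str = "Standard") -> Any:
    """Start copying an archived blob into a new blob in an online tier.

    The archived source blob stays in the Archive tier. The copy finishes
    asynchronously once rehydration completes, so the caller has to check
    back later before telling the user the file is available.
    Plain strings are used here; the SDK also exposes the
    StandardBlobTier and RehydratePriority enums.
    """
    if tier not in ONLINE_TIERS:
        raise ValueError(f"unsupported destination tier: {tier}")
    if priority not in REHYDRATE_PRIORITIES:
        raise ValueError(f"unsupported rehydrate priority: {priority}")
    return destination.start_copy_from_url(
        source_url,
        standard_blob_tier=tier,
        rehydrate_priority=priority,
    )
```

With the real SDK, `destination` would be a `BlobClient` for the new blob, for example obtained via `BlobServiceClient.get_blob_client(container, blob)`, and `source_url` would be the archived blob’s `url`. Because rehydration can take hours, checking on the copy is exactly the kind of deferred work the scheduling discussed next has to handle.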

Managing lifecycles

Now that the choice of cloud services is out of the way, another technical aspect worth mentioning is how we handle all the scheduling around data lifecycles. Scheduled tasks require careful consideration, because many of the storage operations involved are asynchronous. For example:

- Archiving a file when it is due to be archived

- Deleting a file after its Retention Period

- Restoring an archived file upon request

- Notifying the user once the file has been restored

Put simply, all of these tasks are scheduled to run at a later point in time. Implementing the scheduling logic to handle them is not very cumbersome in itself, but it can become quite a daunting task once multiple instances of a service are running. Our approach to overcoming this challenge was to use Kubernetes CronJobs.

As an application, Archivum is expected to be used by many people across an organization and beyond, so using Kubernetes to scale our services already pays off. But there is a catch: Scheduled tasks and multiple instances do not mix well. If every instance runs the same scheduling logic, each task is executed once per instance, and confining the logic to one specific instance is not an option either, since instances may be created or deleted dynamically at any time. Consequently, the scheduling logic has to live outside the services, and CronJobs serve this purpose very well. In the end, Archivum’s backend puts Kubernetes’ massive capabilities to work not only for scaling but also for scheduling!
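
To illustrate why moving the scheduling out of the services helps, here is a Python sketch of what a CronJob’s container might run on each tick. It loads pending tasks from shared storage, executes the ones that are due, and marks them done. The Kubernetes documentation notes that a CronJob may occasionally create two Jobs for one scheduled time, or none, so the work should be idempotent. Setting `concurrencyPolicy: Forbid` on the CronJob additionally prevents runs from overlapping. The task model, the in-memory list standing in for a database, and the handler callbacks are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum
from typing import Callable, Dict, List


class TaskKind(Enum):
    ARCHIVE = "archive"  # move a file to cold storage after its Archival Period
    DELETE = "delete"    # remove a file after its Retention Period
    RESTORE = "restore"  # bring an archived file back on request
    NOTIFY = "notify"    # tell the user a restored file is available


@dataclass
class Task:
    task_id: str
    kind: TaskKind
    file_id: str
    due_at: datetime
    done: bool = False


def run_due_tasks(tasks: List[Task],
                  handlers: Dict[TaskKind, Callable[[str], None]],
                  now: datetime) -> List[str]:
    """Execute every pending task whose due time has passed, oldest first.

    A task is marked done only after its handler returns, so a later run
    (e.g. a duplicate Job) skips it instead of repeating the work. If a
    handler raises, the run stops and that task stays pending for the next
    run, which is why handlers should be idempotent as well.
    Returns the IDs of the tasks executed in this run.
    """
    due = sorted((t for t in tasks if not t.done and t.due_at <= now),
                 key=lambda t: t.due_at)
    executed = []
    for task in due:
        handlers[task.kind](task.file_id)
        task.done = True
        executed.append(task.task_id)
    return executed
```

Because the state lives in shared storage rather than inside any service instance, scaling the services up or down has no effect on when, or how often, a task runs.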

Impactful applications

As we’ve noted a few times already, the smart allocation of data between hot and cold storage enables companies and other organizations to face their exponential data growth and flatten their cost curves. Many use cases benefit from Archivum’s way of using storage tiers, but to keep this blog post a reasonable length, I’ll just briefly highlight three of them:

- Daily business: Archivum can be used to actively store and share day-to-day files, while the rest of the lifecycle happens passively in the background. Archiving and restoring are fully automated. From the end user’s perspective, all tiers are unified, as if they were interacting with a single file storage.

- Regulatory archives: Organizations usually have certain types of documents that must be preserved for a set period of time. For compliance reasons, mandatory retention periods can range from a few years to decades. Archivum can easily be integrated into existing workflows to meet these requirements cost-efficiently.

- Disaster recovery: A growing trend is to safeguard against any kind of temporary or permanent data center failure, often by storing redundant copies of files in different locations. Building on this idea, Archivum can serve as a backup and recovery solution: Even if the original data is kept on hot storage, backup copies can be moved to cold storage and restored if the primary system fails.
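
The unified view described in the daily-business case can be illustrated with a small facade. A download request is served directly while a file is online. Otherwise it triggers a restore exactly once and tells the user to wait for the notification. This Python sketch uses hypothetical types and callbacks (`StoredFile`, `DownloadResult`, `request_download`, `read_online`, `start_restore`) and is not Archivum’s actual API.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class StoredFile:
    file_id: str
    tier: str                       # e.g. "Hot" while online, "Archive" once archived
    restore_requested: bool = False


@dataclass
class DownloadResult:
    ready: bool
    content: Optional[bytes] = None
    message: str = ""


def request_download(f: StoredFile,
                     read_online: Callable[[str], bytes],
                     start_restore: Callable[[str], None]) -> DownloadResult:
    """Serve a download the same way regardless of the file's current tier."""
    if f.tier != "Archive":
        # Online tier: hand out the content immediately.
        return DownloadResult(ready=True, content=read_online(f.file_id))
    if not f.restore_requested:
        # Archived: trigger the restore only once; repeated requests just wait.
        start_restore(f.file_id)
        f.restore_requested = True
    return DownloadResult(ready=False,
                          message="File is being restored; you will be notified.")
```

Keeping the "restore once, then wait" logic behind a single entry point is what lets the user treat hot and cold storage as one file store.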

Closing thoughts

Generally speaking, I believe that making smart use of cold storage is a highly relevant topic. As digital transformation takes hold of more and more aspects of corporate life, managing everything efficiently, in terms of both cost and automation, becomes essential.

I’d like to end this blog post with a glimpse of an emerging use case: Slowly but surely, we are entering the age of artificial intelligence (AI) and machine learning (ML). Lately, the importance of individual pieces of data has become a lot more obvious. Especially in light of novel algorithms, data that previously seemed useless has gained substantial value. Very powerful tools already exist for training models on many diverse tasks, and their bottleneck has mostly been a lack of training data. Companies that want to take advantage of future AI/ML innovations are therefore well advised to anticipate what may become significant and to retain data of any kind in a structured manner. All this potentially precious training data has to be kept somewhere, even if it won’t be accessed any time soon.

To conclude this train of thought: Another of Archivum’s strong points may be that it offers a way to pile up training data in inexpensive cold storage instead of retaining it at a high price or deleting it outright.

—

And that’s it! I hope you enjoyed the read and gained some new insights. Don’t forget: There are more posts like this to come! 🙂

Mahoor

Mahoor is Head of Cloud-Native Engineering at MobiLab.