TL;DR

In most cases, estimates made by software engineers are still superior to other methods. With sufficient information, they can be accurate enough to be useful, but expect some over-optimism and overconfidence. People normally estimate the most likely effort a task needs, and summing such estimates produces an overall estimate that is too low. Take care to avoid psychological pitfalls such as anchoring, which can severely distort intuitive judgment.

Disclaimer

A substantial amount of this post is based on the excellent and free e-book Time Predictions – Understanding and Avoiding Unrealism in Project Planning and Everyday Life by Torleif Halkjelsvik and Magne Jørgensen. I highly recommend their work to anyone who has to deal with project planning.

Intro

I have vivid memories of the first time I was forced to estimate how long it would take me to code something up. I had to justify myself if, for example, a task took me 3.5 hours instead of 2.5 hours. While my senior colleagues treated this process as a minor nuisance, I was furious. How was I supposed to tell how long a complex task takes in an uncertain environment, with incomplete information? Looking at the messy Excel sheets where we kept our estimates back then, it was obvious that our numbers were wildly off. We did not get better at it over time, and the discussions never stopped. In retrospect, not only did we have a chaotic way of estimating, but we also did not realize that estimates and commitments are different things.

A decade later, estimating, or rather the way many people and companies approach it, leaves me slightly bewildered. By now, agile project management techniques have become mainstream even in corporations. But make no mistake: there is little evidence that agile practices per se outperform more old-fashioned approaches to estimation.

I intend to show that we can do better. There is a lot of research on estimation in different contexts, and we can also draw on more general findings about human decision-making from psychology and behavioral economics. I will focus on what is done most commonly: engineers somehow try to guess how much effort a task will take. Currently, this way of estimating generally outperforms other methods.

Note that this is not a discussion about whether one should estimate effort at all. There are situations in which estimates have no value and others in which there is no way around them.

In the first part of this blog post, I will take a closer look at what estimates actually are and how common planning fallacies can be explained by behavioral economics and psychology.

The Nature of Estimates

The first thing usually overlooked about an estimate is that it cannot be accurately represented by a single number: it is a probability distribution.

Imagine a very regular activity such as taking a tram to work. If this is something you do, you probably just recalled from memory how long a typical trip takes. But this is only a part of the story. Sometimes the tram is slightly late. Rarely, you leave your home just a bit too late and miss the tram you intended to take. There might have been one or two instances where the tram line was out of service because of an accident, and you arrived at work much later than expected.

If you plotted a few hundred observations of your trip duration, it would look like this.

The first takeaway is: when estimating, people report what they think is the most likely outcome. This maximizes the chance of being right, but it is often too optimistic. The median effort and the average effort can be significantly higher than the most likely effort, because the probability distributions are right-skewed. In our example, this is pretty obvious. In an extreme case, your trip to work might take an hour instead of 10 minutes because you had to walk when the tram was out of service. The distribution cannot be symmetrical: without time travel, you can never arrive at work 50 minutes before you left. The same holds for more irregular and complex tasks such as programming. A task can never take less than zero time, while overruns in principle have no upper limit.
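To make the gap between these three numbers concrete, here is a small Python sketch over a made-up log of 100 tram trips. The counts in `trip_log` are invented for illustration, chosen so that the most likely duration is 12 minutes and the average is 17 minutes; they are not measured data.

```python
from statistics import mean, median, mode

# Hypothetical log of 100 tram trips: duration in minutes -> number of trips.
# The counts are made up; they only mimic a right-skewed distribution.
trip_log = {12: 45, 14: 20, 17: 15, 20: 10, 25: 5, 60: 5}

# Expand the counts into individual observations.
trips = [minutes for minutes, count in trip_log.items() for _ in range(count)]

most_likely = mode(trips)  # the value people tend to report
typical = median(trips)    # half of all trips take at least this long
average = mean(trips)      # the value you need when adding trips up

print(f"most likely: {most_likely:.1f} min, median: {typical:.1f} min, "
      f"average: {average:.1f} min")
# → most likely: 12.0 min, median: 14.0 min, average: 17.0 min
```

A handful of rare but very long trips is enough to pull the average well above the most likely value, while the median sits in between.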

A less obvious consequence is that adding up several such estimates makes the total even more unrealistic. Going back to the tram example, let us estimate how much time you spend on the tram in a year. Assuming 660 trips per year (both directions combined), simply multiplying by the most likely trip duration of 12 minutes gives 7,920 minutes. In reality, you are very likely to encounter late trams and a few more extreme delays. To get a good estimate, you have to base the calculation on the average trip duration, which is 17 minutes, giving 11,220 minutes. In software development projects, it does happen that task estimates are too pessimistic at the individual level (most tasks are overestimated), yet the overall estimate for the project still ends up over-optimistic.
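A small simulation illustrates why summing most likely values falls short. It uses an invented per-trip distribution (most likely 12 minutes, average 17 minutes) and assumes, for simplicity, that trips are independent of each other. The names `DURATIONS`, `WEIGHTS` and `simulate_year` are hypothetical.

```python
import random

# Hypothetical per-trip distribution (made-up numbers): 12 minutes is the
# most likely duration, but delays pull the average up to 17 minutes.
DURATIONS = [12, 14, 17, 20, 25, 60]  # minutes
WEIGHTS = [45, 20, 15, 10, 5, 5]      # relative frequency of each duration

TRIPS_PER_YEAR = 660
MOST_LIKELY = 12
AVERAGE = sum(d * w for d, w in zip(DURATIONS, WEIGHTS)) / sum(WEIGHTS)

# Summing "most likely" values vs. summing averages.
naive_total = TRIPS_PER_YEAR * MOST_LIKELY  # 7920 minutes
expected_total = TRIPS_PER_YEAR * AVERAGE   # 11220.0 minutes


def simulate_year(rng: random.Random) -> int:
    """Total tram time for one year, assuming independent trips."""
    trips = rng.choices(DURATIONS, weights=WEIGHTS, k=TRIPS_PER_YEAR)
    return sum(trips)


rng = random.Random(42)  # fixed seed so the run is reproducible
years = [simulate_year(rng) for _ in range(1000)]
mean_year = sum(years) / len(years)

print(f"naive: {naive_total}, expected: {expected_total:.0f}")
print(f"simulated: min {min(years)}, mean {mean_year:.0f}")
```

Because no single trip is shorter than 12 minutes in this made-up distribution, no simulated year can come in below the naive total; the simulated yearly totals cluster around the average-based figure instead.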

Unlike in the tram example, in software projects it is not easy to approximate the average effort. We cannot look into the future, and the bad events that cause the long tail of a distribution are often the famous “unknown unknowns”. Our best bet is to look at the past instead. If we have done many estimates before and know the actual effort spent, we can approximate average efforts from our “most likely” estimates[1]. In longer-lived projects that work in iterations (sprints), estimating how much can be done in the next sprint based on past velocity has a similar effect.
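One simple way to turn past data into a correction, assuming the team and the kind of tasks stay roughly the same, is to compare total actual effort with total estimated effort and scale new most likely estimates by that ratio. The task history is made up, and this ratio-of-totals approach is an illustration, not necessarily the specific method from the book. The invented data also mirrors the effect described earlier: most tasks were overestimated, yet the total was underestimated.

```python
# Hypothetical history of finished tasks: (most likely estimate, actual), in hours.
history = [(4, 3), (8, 7), (2, 2), (16, 30), (6, 5), (4, 3)]

total_estimated = sum(est for est, _ in history)  # 40
total_actual = sum(act for _, act in history)     # 50

# Ratio of actual to estimated effort, measured on totals rather than
# averaged per task, because we want to correct summed estimates.
correction = total_actual / total_estimated       # 1.25

# Most tasks were overestimated, yet the total was underestimated.
overestimated = sum(1 for est, act in history if act < est)


def adjusted_total(most_likely_estimates: list[float]) -> float:
    """Scale a sum of most likely estimates by the historical correction."""
    return sum(most_likely_estimates) * correction


print(overestimated, correction, adjusted_total([3, 5, 8]))
# → 4 1.25 20.0
```

With more history, the same comparison could be done per task type or per team, as long as enough finished tasks are available to make the ratio meaningful.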

Biases & Heuristics

We now know that the human mind uses heuristics to make decisions and that these result in biases which we often cannot overcome[2]. Being aware of our limitations gives us a chance to shape our environment in a way that prevents bad outcomes when it matters. I will highlight human biases & heuristics which are relevant to task estimation. In addition, I will point out fallacies specific to project planning.

Overoptimism

Overoptimism appears to be universal to some degree. In many respects this is a good thing: problem-solving and creativity require optimism. If you believed your chance of success were close to zero, you would probably just give up.

For task estimation, on the other hand, our goal is to be realistic. Empirical findings suggest that there is no universal over-optimism in this context. A general tendency to be too optimistic only starts to appear for tasks that are estimated at 100 hours of effort or more. The takeaway: make sure the tasks you are estimating are not too big.

Overconfidence

When asked to predict something with a high level of confidence, you should give a vaguer answer (a wider range) than for a prediction at a lower level of confidence. But this is not what happens: people give the same estimates when asked for a 50% confidence level and for a 90% confidence level. Our intuition is not equipped for this task: we have little control over the confidence level at which we estimate, and no way to express it in probability percentages. In experiments, the confidence level at which we actually estimate consistently appears to be in the range of 50 to 70%. All we can do in this regard is to accept our limitations. Just do not ask for predictions with high confidence levels.
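Calibration of this kind is usually measured by comparing the stated confidence with the hit rate: how often the actual outcome fell inside the range someone gave. The sketch below does this for an invented set of past “90% confidence” ranges; both the data and the `hit_rate` helper are hypothetical.

```python
# Hypothetical past estimates given as "90% confidence" ranges (hours),
# together with the effort that was actually spent.
past = [
    # (low, high, actual)
    (4, 8, 6),
    (2, 5, 7),
    (10, 20, 18),
    (1, 3, 3),
    (5, 10, 14),
    (8, 16, 12),
    (3, 6, 9),
    (6, 12, 11),
    (2, 4, 3),
    (12, 24, 30),
]


def hit_rate(records: list[tuple[float, float, float]]) -> float:
    """Share of actual outcomes that fell inside the stated range."""
    hits = sum(1 for low, high, actual in records if low <= actual <= high)
    return hits / len(records)


print(f"stated confidence: 90%, observed hit rate: {hit_rate(past):.0%}")
# → stated confidence: 90%, observed hit rate: 60%
```

A hit rate well below the stated confidence, as in this made-up example, is the signature of overconfidence.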

Anchoring

If you have never heard of anchoring, just google it. In short, information in our mind influences our decision-making, even if it is completely unrelated to the question we are answering. In one demonstration of this effect, experienced judges read the description of a shoplifting case and then rolled a pair of dice before deciding on a prison sentence. They gave longer sentences when the dice turned up higher numbers[3].

The effect has also been demonstrated for effort estimates. The important thing to note is: once an anchor is out, our mind cannot ignore it. This means it is imperative to avoid giving people anchors in the first place. Here are a few examples of anchors:

- Your customer has told you their estimate for a feature your team is supposed to develop for them. They are not technologically savvy, and may even have an interest in lowering your estimate. Do not pass their estimate on to your developers. Even if the numbers are completely off, they will influence your team’s estimates.

- The requirements of a story contain words or phrases that can be related to complexity, such as “simple“, “trivial“, “difficult“, “easy“, “complex“.

- You are doing planning poker, but someone breaks the rules and reveals their estimate before everyone else does.

The Sequence Effect

This one is probably closely related to anchoring. If we make several estimates in a row, as is common in a planning session, each estimate is influenced by the ones before it. A big task estimated right after a small one receives a lower estimate than it would if the order were reversed. Over a long series, estimates tend to converge toward the average of all the estimates done before. To limit how much your estimates are distorted by this effect, do not estimate too many tasks too quickly, and take a break at least once per hour.

The Format Effect

Instead of asking how much time one needs to complete a given task, one can also ask the inverse question: how many tasks can you complete in a given time? The second variant results in more over-optimism, especially if the time frame is short and if there is a lot of work to be done. Experiments show that time-boxing leads to estimates that are lower by a factor of two.

This is something to look out for when agreeing on a sprint commitment. It is usually more accurate to use the actual velocity from the past few sprints to determine a realistic commitment than asking the team to predict what they can achieve.
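Deriving a commitment from recent velocity can be as simple as averaging the last few sprints. The sketch below assumes work is measured in story points and averages a window of three sprints; the data, the window size and the `velocity_forecast` helper are arbitrary choices for illustration.

```python
# Hypothetical story points completed in recent sprints, oldest first.
completed_points = [30, 21, 18, 25, 20]


def velocity_forecast(history: list[int], window: int = 3) -> float:
    """Average of the last `window` sprints, used as the next commitment."""
    if not history:
        raise ValueError("need at least one finished sprint")
    recent = history[-window:]
    return sum(recent) / len(recent)


print(velocity_forecast(completed_points))
# → 21.0
```

A short window reacts quickly to changes in the team, while a longer one smooths out unusually good or bad sprints.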

The Scaling Fallacy

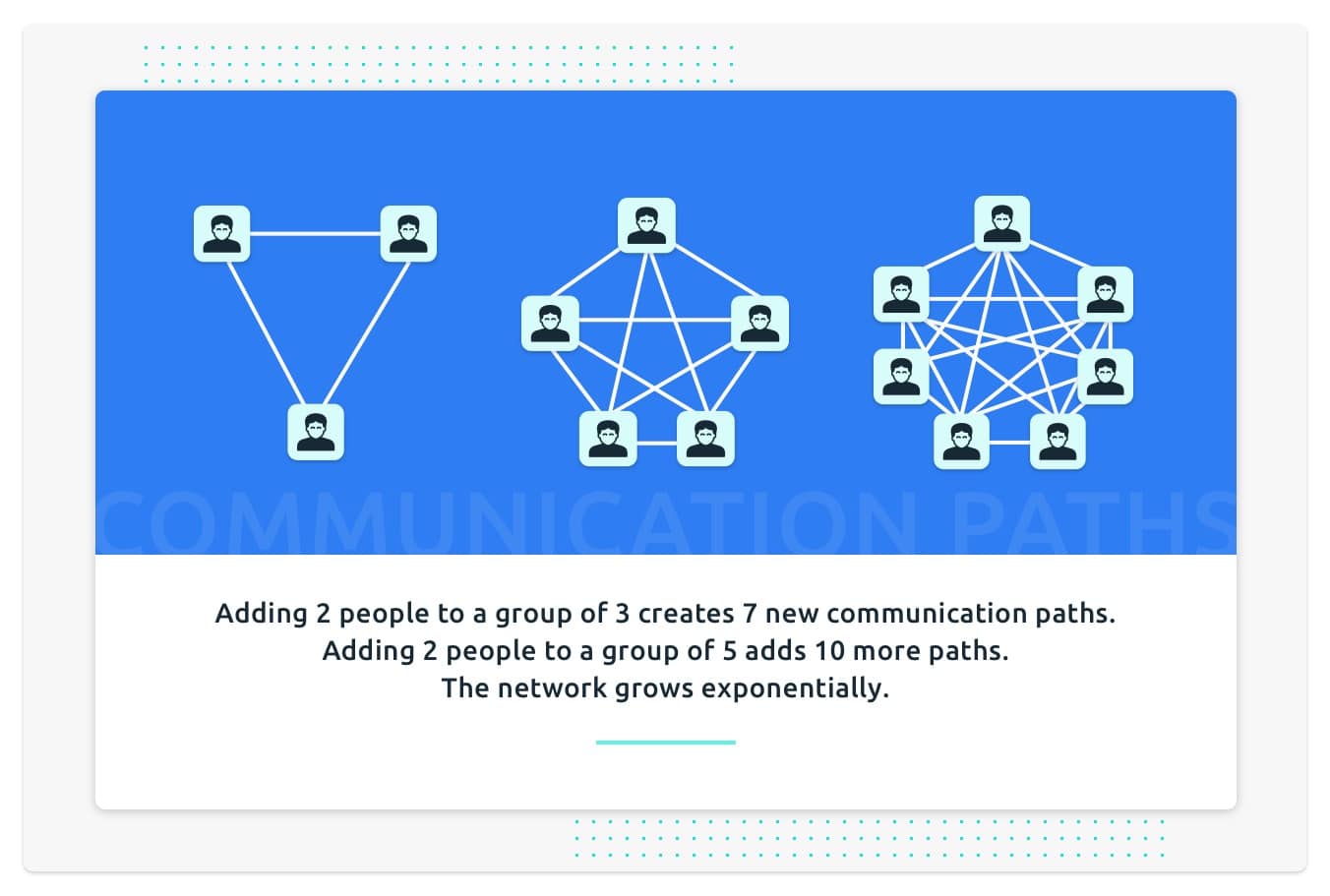

Coordination effort is hard to grasp, and it grows much faster than team size. When estimating, you should know the size of the team that will work on the tasks. You also have to expect more over-optimism for larger teams, as people underestimate the cost of coordination.
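A common way to illustrate why coordination costs outpace headcount, popularized by Fred Brooks in The Mythical Man-Month rather than taken from this post's sources, is to count the pairs of people who might need to coordinate: n(n - 1) / 2 for a team of n. Treating every pair as a communication channel is a simplification, but it shows how quickly the number grows.

```python
def communication_channels(team_size: int) -> int:
    """Number of distinct pairs of people who may need to coordinate."""
    if team_size < 0:
        raise ValueError("team size cannot be negative")
    return team_size * (team_size - 1) // 2


# Print how the number of pairwise channels grows with team size.
for size in (2, 3, 5, 8, 12):
    print(f"{size:>2} people -> {communication_channels(size):>2} channels")
```

Going from 5 to 12 people a bit more than doubles the team, but multiplies the number of channels from 10 to 66.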

Stay tuned for the second part of this post. We will take a look at common estimation practices, and discuss which ones are worth implementing and which ones are just a waste of your time.

References

[1]: https://home.simula.no/~magnej/Time_predictions_book/

[2]: https://en.wikipedia.org/wiki/List_of_cognitive_biases

[3]: https://www.thelawproject.com.au/blog/anchoring-bias-in-the-courtroom

Sources for all claims made in the post can be found here: Time Predictions – Understanding and Avoiding Unrealism in Project Planning and Everyday Life

Nikolaj, Software Engineer at MobiLab.